The Intelligence Factory: AI Infrastructure

Source: ARK Invest Big Ideas 2026

Disclaimer: This is not investment advice. This article examines ARK Invest's publicly available research report and stress-tests its claims. Nothing here should be taken as a recommendation to buy or sell any asset.

Big Ideas 2026, Part 2: The Intelligence Factory

The cost of AI inference dropped more than 99% in a single year. That should settle the infrastructure debate. It makes everything more complicated instead.

The Big Picture

ARK's AI infrastructure report starts with Jevons' Paradox.

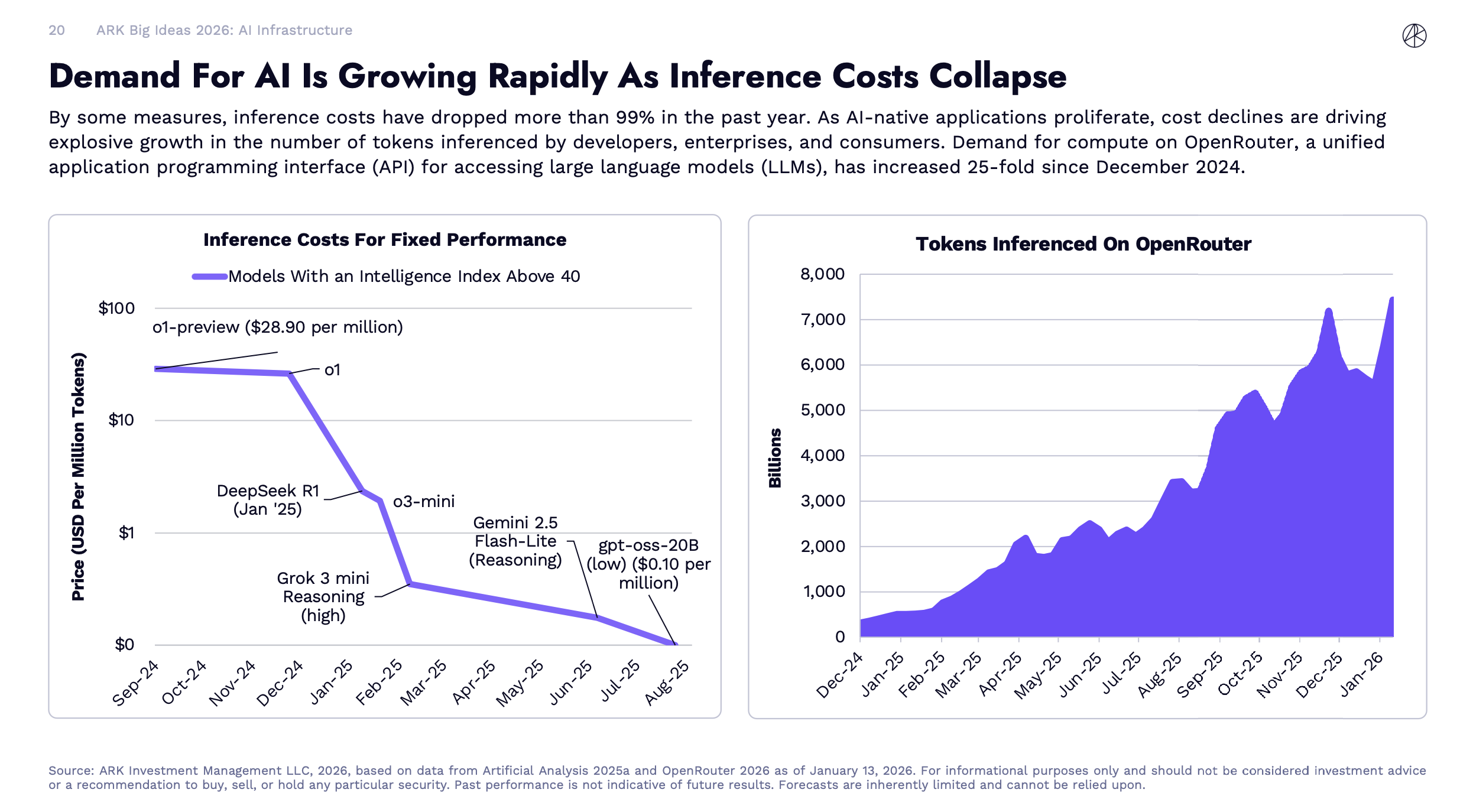

Inference costs have dropped more than 99% in the past year. As AI-native applications proliferate, cost declines are driving explosive growth in the number of tokens inferenced by developers, enterprises, and consumers.

Cheaper intelligence triggers Jevons' Paradox. The 19th-century economist William Stanley Jevons observed that more efficient steam engines did not reduce coal consumption. They made coal economical for new applications, and total consumption exploded. ARK is applying the same logic to AI: the cheaper each unit of intelligence gets, the more units get consumed. You do not save compute. You spend it on problems you never would have touched before.
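At bottom, Jevons' Paradox is a claim about how sensitive demand is to price. Here is a minimal sketch that assumes a toy constant-elasticity demand curve. The functions and the elasticity values are hypothetical and not taken from ARK's report. When demand is elastic enough (elasticity above 1), a price cut raises total spending instead of lowering it.

```python
# Toy constant-elasticity demand model (an illustrative assumption, not ARK's model):
#   tokens demanded Q = k * P ** (-elasticity)
#   total spend     S = P * Q = k * P ** (1 - elasticity)
# When elasticity > 1, a lower price raises total spend: the Jevons "backfire" case.

def demand(price: float, elasticity: float, k: float = 1.0) -> float:
    """Tokens demanded at a given price per token (hypothetical curve)."""
    return k * price ** (-elasticity)


def spend(price: float, elasticity: float, k: float = 1.0) -> float:
    """Total spend on inference = price * quantity demanded."""
    return price * demand(price, elasticity, k)


old_price = 1.0
new_price = old_price * (1 - 0.99)  # a 99% cost drop means 100x cheaper

for e in (0.5, 1.0, 1.5):  # hypothetical elasticities
    token_growth = demand(new_price, e) / demand(old_price, e)
    spend_change = spend(new_price, e) / spend(old_price, e)
    print(f"elasticity={e}: tokens x{token_growth:,.0f}, spend x{spend_change:.2f}")
```

With these toy numbers, a 100x price cut at elasticity 1.5 yields about 1,000x more tokens and roughly 10x more total spend. At 0.5, tokens grow only 10x and spending falls to a tenth. Whether real inference demand sits above or below 1 is the question the infrastructure bet rests on.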

Data Center

Data center capital investment is growing at 29% annually, ARK says, and will surpass $1.4 trillion cumulatively by 2030. Hyperscaler CapEx from the top five spenders (Amazon, Alphabet, Microsoft, Meta, and Oracle) is projected to exceed $500 billion in 2026 per ARK, with third-party estimates from IEEE ComSoc running even higher at $600 billion.

This is the structural bet behind the $1.4 trillion forecast. Not that AI gets more expensive, but that AI gets so cheap it becomes infrastructure, the way electricity and bandwidth did, and total demand swamps any per-unit efficiency gains.

Whether that logic holds is the central question. ARK is not alone here. Goldman Sachs estimates data center power demand will rise 165% by 2030 compared to 2023 levels. The IEA reports AI-driven data centers already contribute roughly one-tenth of global electricity growth (and nearly one-fifth in advanced economies). The bet is widely held. That does not make it right, but this is not just ARK talking.
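The growth figures in this section are easier to compare once they are in the same units. Below is a quick sketch using standard compound-growth formulas. The helper names are my own. The five-year window for ARK's 29% rate is an illustrative assumption, because the starting year isn't stated here.

```python
import math

# Compound-growth helpers for sanity-checking headline growth figures.

def multiplier(annual_rate: float, years: float) -> float:
    """Total growth multiple after compounding annual_rate for `years` years."""
    return (1 + annual_rate) ** years


def doubling_time(annual_rate: float) -> float:
    """Years needed to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_rate)


def implied_cagr(total_increase: float, years: float) -> float:
    """Annual rate implied by a total increase (1.65 means +165%)."""
    return (1 + total_increase) ** (1 / years) - 1


# ARK: data center capital investment growing 29% a year.
print(f"29%/yr doubles every {doubling_time(0.29):.1f} years")
print(f"29%/yr over an assumed 5 years = x{multiplier(0.29, 5):.2f}")

# Goldman Sachs: data center power demand +165% by 2030 vs 2023 (7 years).
print(f"+165% over 7 years = {implied_cagr(1.65, 7):.1%} per year")
```

At 29% a year, spending doubles roughly every 2.7 years and grows about 3.6x over five years. Goldman's +165% between 2023 and 2030 works out to roughly 15% a year.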

There's a Chinese idiom, 三人成虎: "when three people claim there's a tiger, it starts to feel real." The original warning is about false consensus: repeat something enough times and people believe it, even if it's not true. But there's a flip side. When Goldman Sachs, the IEA, and ARK all point in the same direction, it's worth paying attention. Not because consensus equals truth, but because independent analyses converging on the same conclusion are structurally different from one firm talking its own book.


GPU Market (OK... My Favourite Part)

The GPU market is no longer a one-horse race, but the nuances matter.

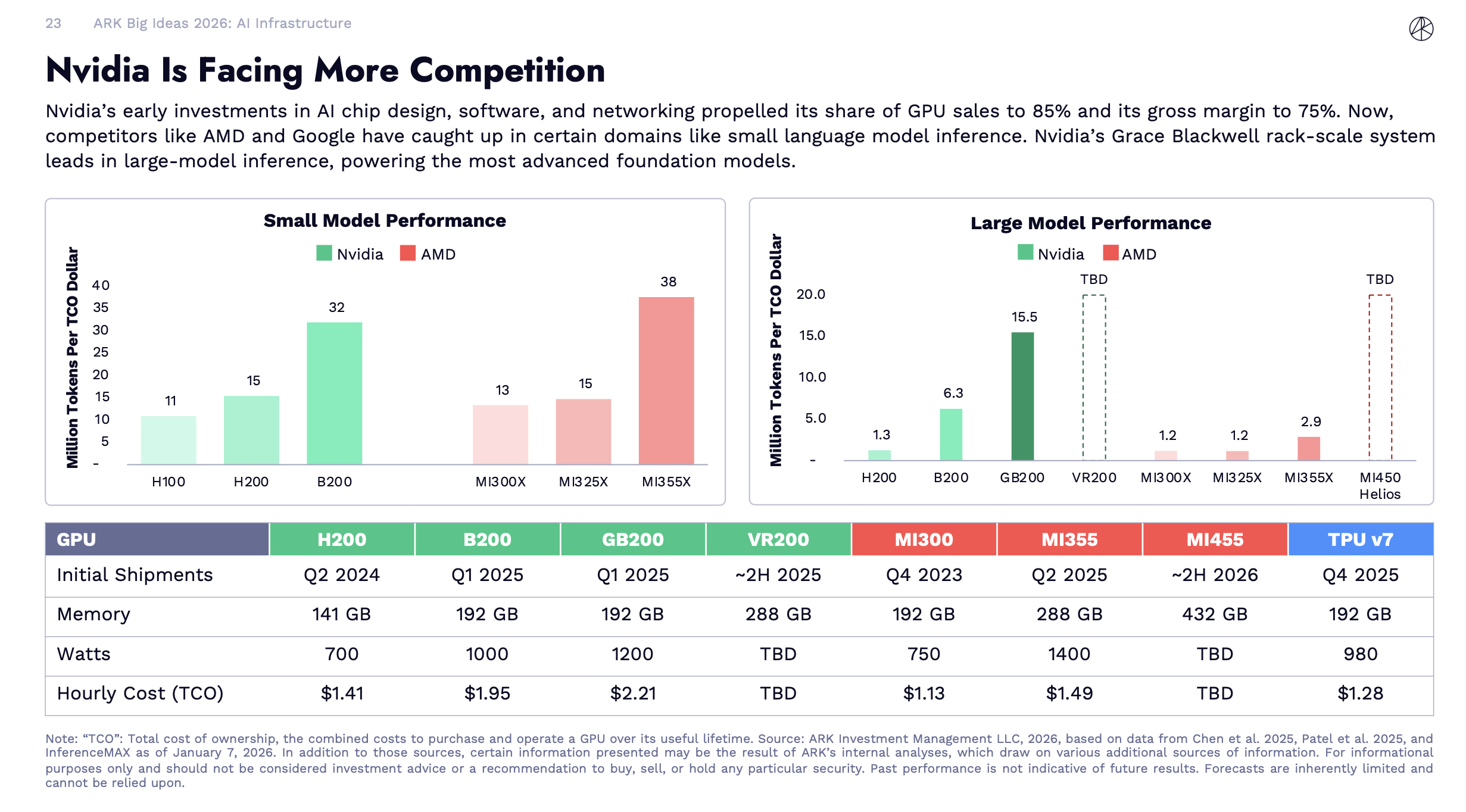

According to this chart, Nvidia's GB200 still leads AMD's MI355X by 5-12x on tokens per TCO dollar for large-model inference. TCO is total cost of ownership, the combined cost to purchase and operate a GPU over its useful lifetime. Large-model inference is the workload behind frontier models such as GPT-4-class systems, and it is where hyperscalers concentrate their biggest budgets. That is a commanding lead on the workload that decides who wins the biggest contracts.

On small-model inference, the picture reverses. AMD's MI355X delivers 38 million tokens per TCO dollar versus 32 million for Nvidia's B200. AMD also has a meaningful price advantage on hourly TCO: $1.49 versus $2.21 for the GB200. For companies running smaller, specialized models at scale (which describes most enterprise deployments), AMD is now the rational choice on economics alone.
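The tokens-per-TCO-dollar metric is throughput divided by cost. The sketch below recomputes the gaps quoted above. As in the text, the per-dollar comparison is against the B200 and the hourly comparison is against the GB200. The 50 million tokens per hour throughput is a hypothetical placeholder, not a benchmark figure.

```python
# Tokens per TCO dollar: tokens a GPU produces per dollar of total cost of
# ownership (purchase + operation, amortized per hour).

def tokens_per_tco_dollar(tokens_per_hour: float, tco_per_hour: float) -> float:
    return tokens_per_hour / tco_per_hour


def pct_advantage(a: float, b: float) -> float:
    """How much larger a is than b, as a fraction."""
    return a / b - 1


# Figures quoted above: millions of tokens per TCO dollar, small-model inference.
mi355x_small, b200_small = 38.0, 32.0
print(f"MI355X vs B200: {pct_advantage(mi355x_small, b200_small):.1%} more tokens per dollar")

# Hourly TCO quoted above (USD per GPU-hour).
mi355x_hourly, gb200_hourly = 1.49, 2.21
print(f"MI355X hourly TCO is {1 - mi355x_hourly / gb200_hourly:.1%} lower than GB200")

# Break-even: given a hypothetical MI355X throughput, how many tokens per hour
# must a GB200 produce to match it on tokens per TCO dollar?
hypothetical_mi355x_tph = 50e6  # illustrative only, not a benchmark figure
target = tokens_per_tco_dollar(hypothetical_mi355x_tph, mi355x_hourly)
gb200_needed = target * gb200_hourly
print(f"GB200 needs {gb200_needed / hypothetical_mi355x_tph:.2f}x the MI355X's hourly output")
```

On these numbers, the MI355X gets about 18.8% more tokens per dollar than the B200 and costs about 32.6% less per hour than the GB200. A GB200 therefore has to deliver roughly 1.48x the MI355X's hourly output just to break even on cost per token.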

The catch: CUDA. Nvidia's software ecosystem remains its most durable moat. Most AI frameworks, training pipelines, and deployment tools are optimized for Nvidia hardware. Switching has real costs, not just in dollars but in engineering time. AMD has been chipping away at this with its ROCm stack for years. The gap is narrowing. It has not closed.

Meanwhile, all four major hyperscalers are building custom silicon. Google's TPUs. Amazon's Trainium and Inferentia. Microsoft's Maia. Meta's MTIA. Every in-house chip is one fewer Nvidia GPU purchased. As inference workloads standardize (a trend that favors commoditization), Nvidia's advantage in general-purpose flexibility starts to matter less.

Here's where it gets interesting. ARK's report briefly flags the idea of sending AI chips to orbit as part of the "Next Gen Cloud," but it does not go deep on the economics. External analysis fills in the picture. According to Scientific American, space-based data centers powered by solar could be significantly more energy-efficient than Earth-based equivalents, because solar irradiance is higher in orbit, with no clouds, no weather, and near-constant sunlight in sun-synchronous orbits. SpaceX's falling launch costs are making orbital infrastructure economically thinkable for the first time. Google has announced Project Suncatcher, solar-powered satellite constellations carrying TPUs, with a demonstration mission planned for 2027. Startups like Starcloud have already launched satellites with Nvidia H100 GPUs onboard.
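The irradiance argument can be checked with back-of-envelope arithmetic. The solar constant (about 1,361 W/m²) and the 1,000 W/m² surface test-condition peak are standard reference values. The 99% orbital sunlit fraction and the 20% ground capacity factor are illustrative assumptions. This rough sketch compares only the energy reaching a panel. It ignores launch costs, cooling, and radiation hardening.

```python
# Back-of-envelope: average solar power reaching one square meter of panel.
# Only the two reference irradiances are standard values; the availability
# fractions below are hypothetical.

SOLAR_CONSTANT_W_M2 = 1361.0  # irradiance above the atmosphere (approx.)
GROUND_PEAK_W_M2 = 1000.0     # standard-test-condition peak at the surface


def average_irradiance(peak_w_m2: float, availability: float) -> float:
    """Time-averaged irradiance, given the fraction of peak captured on average."""
    return peak_w_m2 * availability


# Hypothetical: ~99% sunlit fraction in a dawn-dusk sun-synchronous orbit.
orbit = average_irradiance(SOLAR_CONSTANT_W_M2, 0.99)
# Hypothetical: ~20% average capacity factor on the ground (night, weather,
# sun angle); real values vary widely by site.
ground = average_irradiance(GROUND_PEAK_W_M2, 0.20)

print(f"orbit ~{orbit:.0f} W/m2, ground ~{ground:.0f} W/m2, ratio ~{orbit / ground:.1f}x")
```

Under those assumptions, an orbital panel collects roughly 6.7x as much energy on average as the same panel on the ground. Changing the ground capacity factor moves the ratio proportionally.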

To be honest, when I first heard about this, my reaction was: "Sure, you're making up stories again to pump your stock." But apparently this isn't science fiction. It's 2 to 5 years from being a real option for large-scale workloads.

The Bull and Bear Case

Bull case: Jevons' Paradox is real and the historical evidence is strong. Cheaper inference is creating entirely new demand categories: AI agents, always-on applications, and embedded intelligence in devices that previously could not afford it. The total addressable market is expanding faster than any single supplier can serve. Even if Nvidia's market share slips from 85% to 60%, the pie grows from $200 billion to potentially $1.4 trillion. A smaller slice of a much larger pie still means enormous absolute revenue growth. The orbital data center thesis, if it plays out, creates a structural energy advantage that could benefit vertically integrated players for decades.
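The "smaller slice of a much larger pie" argument is simple multiplication. This sketch takes the bull case's figures at face value. Earlier in this article, $1.4 trillion is ARK's cumulative data center investment figure, so treating it as the chip market here is illustrative.

```python
# Smaller slice of a larger pie: absolute revenue = market size * share.
# Inputs are the illustrative bull-case figures, in $ billions.

def revenue(market_size_bn: float, share: float) -> float:
    return market_size_bn * share


today = revenue(200, 0.85)     # $200B market, 85% share
future = revenue(1_400, 0.60)  # $1.4T market, 60% share
print(f"today ${today:.0f}B -> future ${future:.0f}B ({future / today:.1f}x)")

# Share of the larger pie needed just to match today's revenue.
break_even_share = today / 1_400
print(f"share needed to stand still: {break_even_share:.1%}")
```

That is $170 billion growing to $840 billion, roughly 4.9x, even after share falls 25 points. It also shows how low the bar is: a 12.1% share of a $1.4 trillion pie matches today's revenue.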


Bear case: ARK is projecting demand growth at a pace that requires everything to work simultaneously. Inference costs keep falling. New use cases emerge fast enough to absorb excess supply. Energy constraints get solved. Geopolitical risks (export controls, US-China AI competition) do not disrupt supply chains. Each of these is plausible individually. All of them being true at once is a heavier ask.
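The "heavier ask" is a joint-probability point. This minimal sketch uses made-up probabilities and assumes the four conditions are independent. They probably are not. Positive correlation between them would raise the joint odds, and negative correlation would lower them.

```python
from math import prod

# Hypothetical probabilities for the four conditions listed in the bear case.
# Treating them as independent is itself an assumption.
conditions = {
    "inference costs keep falling": 0.8,
    "new use cases absorb supply": 0.8,
    "energy constraints get solved": 0.8,
    "no geopolitical supply disruption": 0.8,
}

joint = prod(conditions.values())
print(f"each ~80% likely, all four together: {joint:.0%}")
```

Four conditions that each look likely (80%) combine to about 41%.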

ARK's report largely treats the Nvidia growth story as a matter of absolute revenue, not relative valuation. That framing obscures the most important investment question. Nvidia's stock already embeds expectations of sustained high revenue growth (many analysts model 30-40% annually). Suppose the AI chip market grows as ARK projects but Nvidia's share erodes faster than expected, or gross margins compress from 75% toward more competitive levels. Revenue growth could then be real while shareholder returns disappoint. The telecom parallel from Part 1 applies here too. Fiber-optic infrastructure really was built out and internet usage really did explode, yet many infrastructure builders went bankrupt or generated terrible returns while the value flowed to end users.
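The revenue-versus-returns gap shows up in the gross-profit identity, profit = revenue × margin. This sketch uses hypothetical inputs. Revenue grows 30%, the low end of the analyst range above, while margins compress from 75% to an assumed 60% over the same period.

```python
# Gross profit = revenue * gross margin. Revenue can grow while profit stalls
# if margins compress. All inputs are hypothetical.

def gross_profit_growth(revenue_growth: float, margin_start: float, margin_end: float) -> float:
    """Fractional change in gross profit over the period."""
    return (1 + revenue_growth) * margin_end / margin_start - 1


# 30% revenue growth, gross margin falling from 75% to an assumed 60%.
print(f"gross profit growth: {gross_profit_growth(0.30, 0.75, 0.60):.1%}")
```

Revenue grows 30%, but gross profit grows only about 4%.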

Finally, the US-China dynamic adds an asymmetric risk that ARK's report acknowledges but does not fully integrate. China is investing heavily in its own AI stack, has open-sourced competitive models (DeepSeek, Qwen), and is building nuclear reactors at a pace the US is not currently matching. If the application layer is where competition gets decided, as ARK suggests, then the US infrastructure buildout is necessary but not sufficient.

So What: What This Means for You

- If you are an investor: The infrastructure thesis is real but the valuation question is separate from the demand question. Before treating any AI infrastructure company as a buy, ask whether its current stock price already assumes the best-case scenario. For Nvidia specifically, revenue growth and stock returns are not the same thing at current multiples.

- If you are in tech or career planning: Energy is the structural bottleneck that will shape where AI development happens for the next decade. Roles at the intersection of power engineering, data center operations, and AI workload optimization are going to be scarce and valuable.

- If you are just curious: The 99% inference cost drop in one year is the number worth holding onto. It is the mechanism that makes every other AI story in this series possible.
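The investor point above says that revenue growth and stock returns are not the same thing at current multiples. That point reduces to one identity: price = earnings × P/E. This sketch uses hypothetical numbers (35% earnings growth, P/E falling from 50 to 35) and ignores dividends and buybacks.

```python
# Shareholder return ~ (1 + earnings growth) * (P/E_end / P/E_start) - 1,
# ignoring dividends and buybacks. All inputs below are hypothetical.

def price_return(earnings_growth: float, pe_start: float, pe_end: float) -> float:
    return (1 + earnings_growth) * pe_end / pe_start - 1


# Strong earnings growth can still lose money if the multiple de-rates.
print(f"price return: {price_return(0.35, 50, 35):.1%}")
```

Earnings rise 35%, yet the stock falls about 5.5% because the multiple de-rates by 30%.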

This is Part 2 of a 13-part series breaking down ARK Invest's Big Ideas 2026 report. Part 1 covered the convergence thesis.

Sources

- ARK Invest Big Ideas 2026. Primary source for all ARK projections, GPU benchmark tables, and inference cost data cited in this article.

- LLM inference prices have fallen rapidly but unequally across tasks (Epoch AI). Independent tracking of LLM inference price trends, showing steep annual price declines for equivalent performance that vary widely by task.

- AMD vs NVIDIA Inference Benchmark: Who Wins? (SemiAnalysis). Detailed cost-per-million-tokens analysis comparing AMD and Nvidia GPU architectures.

- AI to drive 165% increase in data center power demand by 2030 (Goldman Sachs). Goldman Sachs Research quantifying the energy demand trajectory for AI data centers.

- Space-Based Data Centers Could Power AI with Solar Energy (Scientific American). Analysis of orbital data center energy efficiency and the technical challenges involved.

- Why the AI world is suddenly obsessed with Jevons paradox (NPR Planet Money). Accessible explainer on how Jevons' Paradox applies to the AI efficiency and demand dynamic.

- Hyperscaler capex exceeds $600 billion in 2026 (IEEE ComSoc Technology Blog). Data on hyperscaler capital expenditure projections for 2025 and 2026.